Groupthink and how to avoid it

22/01/2020

What is groupthink?

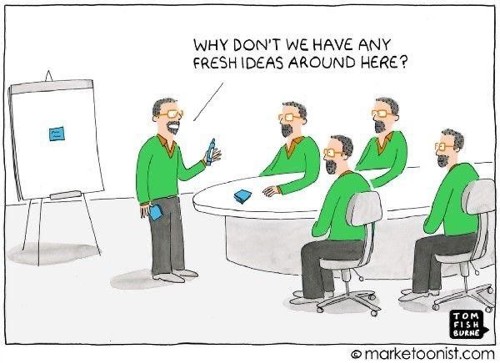

When groups make decisions, they may well take more risks than their members would as individuals; one explanation for this is “groupthink”. The term “groupthink” was popularised by psychologist Irving Janis in the 1970s. Janis defined groupthink as a phenomenon where people seek unanimous agreement in spite of contrary facts pointing to another conclusion.

Groupthink tends to occur when a cohesive group strives to reach consensus within an organisation that has structural flaws, combined with a high degree of homogeneity in members’ social backgrounds and ideology. Extreme pressure or stress can exacerbate the situation.

What are the symptoms of groupthink?

Janis identified eight symptoms of groupthink to look out for:

- Illusions of invulnerability – creating excessive optimism and encouraging risk-taking.

- Ignoring warnings that might challenge the group’s assumptions.

- Unquestioned belief in the morality of the group, causing members to ignore the consequences of their actions.

- Stereotyping those who are opposed to the group as weak, evil, biased, spiteful, impotent, or stupid.

- Direct pressure to conform placed on any member who questions the group, couched in terms of “disloyalty”.

- Self-censorship of ideas that deviate from the apparent group consensus.

- Illusions of unanimity among group members – silence is viewed as agreement.

- Mindguards – self-appointed members who shield the group from dissenting information.

What are the consequences of groupthink?

- Incomplete survey of objectives

- Incomplete assessment of alternatives

- Failure to examine risks of the preferred choice

- Failure to re-evaluate previously rejected alternatives

- Poor information search

- Selection bias in collecting information

- Failure to work out contingency plans

Examples of groupthink disasters

Recent examples of business groupthink disasters (and potential Black Swans!) include Enron, Northern Rock, Lehman Brothers, RBS and HBOS. In the case of HBOS, a well-publicised story circulated about the risk manager who was “censored” for raising concerns about the company’s strategy.

However, much of Janis’ research was based on a series of US foreign policy disasters in the 1960s and 1970s, in particular the Bay of Pigs fiasco.

The Bay of Pigs fiasco

- In 1961, approximately 1,400 Cuban exiles, trained and backed by the CIA, landed on the coast of Cuba at the Bay of Pigs with the intent of overthrowing Fidel Castro’s regime.

- Within three days virtually all were dead or captured. President John F Kennedy approved the invasion based on advice from a “team of experts”. The team made a number of key assumptions that proved to be false, for example:

  - The invasion would trigger an uprising among the Cuban population – it didn’t.

  - There would be no need to retreat from the Bay of Pigs after landing – there was; and so on.

- Janis concluded that the group of experts did not sufficiently consider alternative viewpoints on the invasion and pushed too hard for consensus. JFK accepted their conclusions because they were “experts”.

- Interestingly, when the Cuban missile crisis developed, JFK managed the situation very differently.

Cuban missile crisis in 1962

- When JFK was faced with the Cuban missile crisis in 1962, he seemed to have learned well from the Bay of Pigs.

- During planning meetings, he invited outside experts to share their views, and allowed group members to question them thoroughly.

- He also encouraged group members to discuss possible solutions with trusted colleagues in their own departments, and he even divided the group into sub-groups to break down its cohesion.

- JFK deliberately stayed away from some of the meetings to avoid pressing his own opinion.

- As we know (because we are all still here!), the Cuban missile crisis was resolved peacefully, and Janis credited these measures with improving the quality of the group’s decision-making.

Challenger space shuttle disaster in 1986

- Another classic example of groupthink risk was the Challenger space shuttle disaster in 1986.

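- Against the recommendations of individual engineers, who had warned against launching in cold temperatures, the “group” agreed to launch. The shuttle broke apart 73 seconds after lift-off, killing all seven crew members.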

- Watch this short video which shows explicitly (and shockingly!) how groupthink in the management team contributed to the Challenger disaster http://www.crmlearning.com/groupthink-2nd-edition

How do we avoid groupthink?

There are a number of relatively simple but effective ways of avoiding groupthink from an organisational perspective:

- Use external experts to challenge the group thinking

- Set up multiple groups working on the same issue

- Appoint a “devil’s advocate” for key meetings to test conclusions

- Senior management deliberately avoid expressing opinions before key meetings/projects

How does Assumption Based Communication Dynamics [ABCD] help to avoid groupthink?

When ABCD [https://www.de-risk.com/abcd-risk-management/] was originally developed in 1992, it was specifically designed to avoid the problems that are frequently encountered with traditional risk management techniques. Groupthink was one of the problems considered, and ABCD addresses it through:

- Interviews – workshops are a terrible way of capturing risks, because group dynamics allow certain individuals to dominate the discussion while others remain quiet. Interviews ensure that all voices are heard equally and are actually significantly more efficient than workshops for risk identification.

- Assumptions – by focusing on positive assumptions rather than negative risks, all aspects of the enterprise are considered in a positive and systematic way, and openness is naturally encouraged. People are led to think about what needs to happen for success (i.e. the assumptions) rather than being forced to look for risks, which is psychologically unnatural.

- Assumption ratings – ABCD operates on a “worst case wins” basis, i.e. the person who is most concerned controls the ratings, even if their views are isolated. This forces people to communicate: to keep the “Risk Plan” to a minimum, “optimists” have to talk to “pessimists”. This will either resolve the concerns or identify risks that the majority had missed.

- Top-to-bottom integrity – senior management can set overall risk ratings (i.e. Criticality and Controllability), but they are not allowed to change assumption ratings or close risks/assumptions. Risks can only be closed when the assumption originator agrees to downgrade the ratings.

So would the use of ABCD have prevented the Challenger shuttle disaster? Who knows? But the rigorous structure that ABCD imposes would certainly have ensured that all assumptions were evaluated appropriately for risk before the launch was sanctioned.

News Disclaimer

As well as owning and publishing Comsure's copyrighted works, Comsure wishes to use the copyright-protected works of others. To do so, Comsure is applying for exemptions in UK copyright law. There are certain very specific situations where Comsure is permitted to do so without seeking permission from the owner. These exemptions are set out in the copyright sections of the Copyright, Designs and Patents Act 1988 (as amended) [www.gov.UK/government/publications/copyright-acts-and-related-laws]. Many situations allow Comsure to apply for exemptions. These include: 1] non-commercial research and private study; 2] criticism, review and reporting of current events; 3] the copying of works in any medium, as long as the use is to illustrate a point; 4] no posting is for commercial purposes [payment]. (For a full list of exemptions, please read www.gov.uk/guidance/exceptions-to-copyright.) Where these exceptions apply, Comsure will acknowledge the work of the source author by providing a link to the source material. Comsure claims no ownership of non-Comsure content. The non-Comsure articles posted on the Comsure website are deemed important, relevant and newsworthy to a Comsure audience (e.g. regulated financial services and professional firms [DNFSBs]). Comsure does not wish to take any credit for the publication, and the publication can be read in full in its original form if you click the article's link that always accompanies the news item. Also, Comsure does not seek any payment for highlighting these important articles. If you want any article removed, Comsure will automatically do so on reasonable request if you email info@comsuregroup.com.